Hardware acceleration uses specialized processors to improve throughput, energy efficiency, and sustained performance. GPUs, TPUs, and domain-specific cards make distinct trade-offs in concurrency, memory hierarchy, and interconnects, and they often shift the bottleneck from computation to data movement. Successful deployment depends on workload profiling and on scalable, portable architectures that avoid vendor lock-in. The promise is clear, but balancing portability, cost, and performance in practice remains nuanced and merits closer examination.

What Is Hardware Acceleration and Why It Matters

Hardware acceleration refers to the use of specialized hardware components to perform computationally intensive tasks more efficiently than a general-purpose CPU alone.

The main gains are energy efficiency and manageable thermal behavior. Doing the same work with fewer joules produces less heat, which helps a system sustain performance under load instead of throttling.

Software portability matters just as much. Abstraction layers decouple application code from the implementation details of a particular device, so teams can optimize for today's hardware while keeping the freedom to adopt different accelerators later.
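To make the abstraction-layer idea concrete, here is a minimal sketch of a backend interface with runtime selection and a portable CPU fallback. The names `MatmulBackend`, `CpuBackend`, `HypotheticalGpuBackend`, and `select_backend` are illustrative assumptions, not any real library's API; a production backend would wrap an actual vendor runtime where the placeholder raises `NotImplementedError`.

```python
from typing import Callable, List, Protocol, Sequence


class MatmulBackend(Protocol):
    """Interface the application depends on instead of a specific device."""

    name: str

    def is_available(self) -> bool: ...

    def matmul(self, a: Sequence[Sequence[float]],
               b: Sequence[Sequence[float]]) -> List[List[float]]: ...


class CpuBackend:
    """Portable pure-Python fallback that is always available."""

    name = "cpu"

    def is_available(self) -> bool:
        return True

    def matmul(self, a, b):
        # Naive row-by-column product: correct everywhere, fast nowhere.
        cols = list(zip(*b))
        return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


class HypotheticalGpuBackend:
    """Hypothetical vendor backend; availability is probed at runtime."""

    name = "gpu"

    def __init__(self, probe: Callable[[], bool] = lambda: False):
        self._probe = probe  # e.g. "is a driver and device present?"

    def is_available(self) -> bool:
        return self._probe()

    def matmul(self, a, b):
        raise NotImplementedError("wire this to a real vendor library")


def select_backend(preferred: List[MatmulBackend]) -> MatmulBackend:
    """Return the first available backend in priority order."""
    for backend in preferred:
        if backend.is_available():
            return backend
    raise RuntimeError("no backend available")
```

With `select_backend([HypotheticalGpuBackend(), CpuBackend()])`, the application code calls `matmul` the same way whichever device ends up serving it; swapping vendors means adding a backend, not rewriting callers.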

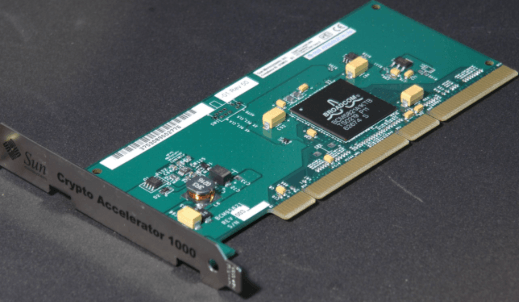

Core Accelerators: GPUs, TPUs, and Domain-Specific Cards

GPUs, TPUs, and domain-specific cards are the core accelerators that bridge software intent and hardware throughput, each with its own design emphases and trade-offs. Their concurrency models, memory hierarchies, and interconnects differ, and those differences shape each architecture's performance envelope.

GPU memory systems are designed for massively parallel data access, while TPU interconnects are optimized for streaming tensor workloads. These differences should guide which accelerator an organization adopts as it pursues scalable, principled acceleration.

How Acceleration Impacts Real-World Workloads

In real-world workloads, acceleration often shifts the bottleneck from computation to memory bandwidth, data movement, and software orchestration. Once the arithmetic is fast, the cost of moving data and of partitioning work sensibly tends to dominate, so systems must balance parallelism against coordination overhead. Teams therefore weigh latency, throughput, and energy against one another, seeking scalable gains without giving up flexibility.
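One common way to reason about the compute-versus-bandwidth shift is the roofline model: attainable performance is the smaller of peak compute and memory bandwidth multiplied by arithmetic intensity (FLOPs per byte moved). The sketch below is a simplified version of that model; the device figures in the usage note are hypothetical round numbers, not measurements of any real accelerator.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gbs: float,
                      flops_per_byte: float) -> float:
    """Roofline bound: capped by either the compute roof or the memory roof."""
    return min(peak_gflops, bandwidth_gbs * flops_per_byte)


def bound_by(peak_gflops: float, bandwidth_gbs: float,
             flops_per_byte: float) -> str:
    """Classify a kernel as memory-bound or compute-bound on a given device."""
    # Ridge point: the intensity at which the two roofs meet.
    ridge = peak_gflops / bandwidth_gbs
    return "memory" if flops_per_byte < ridge else "compute"
```

On a hypothetical device with 10,000 GFLOP/s peak and 1,000 GB/s of bandwidth, an fp32 vector add (1 FLOP per 12 bytes: two 4-byte reads, one 4-byte write) reaches only about 83 GFLOP/s and is memory-bound, while a kernel at 50 FLOPs per byte hits the 10,000 GFLOP/s compute roof. Faster arithmetic does nothing for the first kernel; only less data movement does.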

How to Choose and Deploy Accelerators for Your Stack

Choosing and deploying accelerators requires a structured view that connects workload characteristics to architectural options and deployment realities. Decisions hinge on workload profiling, cost curves, and scalability paths, and they must balance the strengths of a vendor's ecosystem against interoperability. A rigorous evaluation weighs data center economics and energy efficiency, aligns procurement with long-term reliability and maintenance costs, and favors flexible, composable architectures that can evolve without lock-in.
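Workload profiling starts with honest measurements. The following is a minimal benchmarking sketch using only the standard library: it discards warm-up runs (caches, JIT compilation, and device initialization can distort the first calls) and reports the median rather than a single timing. The function names are illustrative. One assumption to flag: many accelerator APIs launch work asynchronously, so a callable wrapping device work would need to synchronize with the device before returning, or the timer captures only launch time.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(fn: Callable[[], object], warmup: int = 3,
              repeats: int = 10) -> Dict[str, float]:
    """Time fn() after warm-up runs; report median and spread in seconds."""
    for _ in range(warmup):
        fn()  # let caches, JIT compilers, or device contexts settle
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()  # for async device work, fn must block until the work completes
        samples.append(time.perf_counter() - start)
    return {
        "median_s": statistics.median(samples),
        "min_s": min(samples),
        "max_s": max(samples),
    }


def compare(candidates: Dict[str, Callable[[], object]],
            **kwargs) -> Dict[str, Dict[str, float]]:
    """Benchmark each candidate implementation of the same workload."""
    return {name: benchmark(fn, **kwargs) for name, fn in candidates.items()}
```

Running `compare` over a CPU baseline and each candidate accelerator path, on representative inputs, gives the raw numbers that cost and energy comparisons can then build on.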


Frequently Asked Questions

How Does Hardware Acceleration Affect Energy Consumption in Edge Devices?

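Hardware acceleration can reduce energy consumption on edge devices by moving suitable workloads to specialized units that complete them with fewer joules, while energy profiling and dynamic scaling help match power draw to demand. Lower peak power also eases thermal limits. The savings are not guaranteed: they depend on the workload and on how efficiently the architecture handles it, including the cost of moving data to and from the accelerator.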
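The "depends on the workload" caveat can be made concrete with an energy balance: offloading saves energy only if accelerator energy, plus host energy spent waiting, plus data-transfer energy, is less than the energy of running on the CPU alone. This is a simplified sketch; the `OffloadProfile` fields are hypothetical inputs you would obtain from energy profiling, and the model ignores effects such as power-state transition costs.

```python
from dataclasses import dataclass


@dataclass
class OffloadProfile:
    cpu_power_w: float        # CPU active power while running the task alone
    cpu_time_s: float         # CPU-only runtime
    accel_power_w: float      # accelerator active power
    accel_time_s: float       # accelerator runtime for the same task
    host_idle_power_w: float  # host power while it waits on the accelerator
    transfer_energy_j: float  # energy to move inputs and outputs


def cpu_energy_j(p: OffloadProfile) -> float:
    return p.cpu_power_w * p.cpu_time_s


def offload_energy_j(p: OffloadProfile) -> float:
    # Accelerator work, host idling over the same interval, and data movement.
    return (p.accel_power_w + p.host_idle_power_w) * p.accel_time_s + p.transfer_energy_j


def offload_saves_energy(p: OffloadProfile) -> bool:
    return offload_energy_j(p) < cpu_energy_j(p)
```

With illustrative figures of 4 W for 0.5 s on the CPU (2.0 J) versus 1.5 W plus 0.5 W of host idle power for 0.1 s and 0.05 J of transfer (0.25 J), offloading wins clearly; raise the transfer cost to 3 J and it loses, even though the accelerator itself is just as fast.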

Can Accelerators Handle Non-Accelerated Workloads Efficiently?

Usually not. Accelerators struggle with workloads that were not designed for them, which exposes interoperability problems and abstraction overhead. They become worthwhile for such workloads only when the system standardizes interfaces, manages heterogeneous hardware, and keeps offload latency low; under those conditions, teams can experiment flexibly while accepting some performance trade-offs.
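Latency is often the deciding factor. A minimal break-even sketch: offloading pays off only if dispatch overhead plus transfer time plus accelerator compute time beats the CPU runtime. The link bandwidth and overhead values below are hypothetical parameters, not specifications of any particular bus or device.

```python
def offload_time_s(bytes_moved: float, link_gbs: float,
                   launch_overhead_s: float, accel_compute_s: float) -> float:
    """End-to-end time to run a task on an accelerator, including data movement."""
    # Transfer over the host-device link, assuming decimal GB/s and no overlap.
    transfer_s = bytes_moved / (link_gbs * 1e9)
    return launch_overhead_s + transfer_s + accel_compute_s


def should_offload(cpu_compute_s: float, bytes_moved: float, link_gbs: float,
                   launch_overhead_s: float, accel_compute_s: float) -> bool:
    """Offload only if the whole round trip is faster than staying on the CPU."""
    total = offload_time_s(bytes_moved, link_gbs, launch_overhead_s, accel_compute_s)
    return total < cpu_compute_s
```

For example, moving 400 MB over a hypothetical 16 GB/s link costs 25 ms before any computation happens. A task the CPU finishes in 50 ms is worth offloading if the accelerator computes it in 2 ms, but a task the CPU finishes in 20 ms is not, no matter how fast the accelerator is.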

What Are the Hidden Costs of Maintaining Accelerator Ecosystems?

Vendor lock-in and opaque integration inflate total cost of ownership, while fragmented resources make capacity and spending harder to predict. Brittle toolchains slow adaptation, and ecosystems that demand rigid standards and frequent, invasive migrations erode an organization's freedom to change course.
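A simple total-cost-of-ownership model shows how these hidden costs accumulate beyond the purchase price. This is an undiscounted sketch under stated assumptions: the `TcoInputs` fields and the split between "visible" and "hidden" costs are illustrative choices, and the figures in the usage note are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TcoInputs:
    hardware_cost: float            # up-front purchase
    annual_energy_cost: float
    annual_maintenance_cost: float  # support, toolchain upkeep, staff time
    migration_cost: float           # one-off cost each time the stack is ported
    migrations: int                 # migrations expected over the period
    years: int


def total_cost_of_ownership(t: TcoInputs) -> float:
    """Undiscounted TCO: purchase + recurring costs + migrations."""
    recurring = (t.annual_energy_cost + t.annual_maintenance_cost) * t.years
    return t.hardware_cost + recurring + t.migration_cost * t.migrations


def hidden_cost_share(t: TcoInputs) -> float:
    """Fraction of TCO from maintenance and migrations (the 'hidden' part)."""
    hidden = t.annual_maintenance_cost * t.years + t.migration_cost * t.migrations
    return hidden / total_cost_of_ownership(t)
```

With hypothetical inputs of 100,000 for hardware, 8,000 per year for energy, 12,000 per year for maintenance, and two migrations at 30,000 each over four years, TCO is 240,000, and maintenance plus migrations account for 45% of it, more than the hardware itself.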

How Do Accelerators Impact Software Licensing Models?

Accelerators push software licensing toward hardware-anchored terms (for example, per-device licenses) and usage-based metrics. These models reward efficient use of hardware but can constrain portability, so the strategic risk is best managed through modular contracts and transparent cost modeling.

Are There Security Risks Unique to Hardware Acceleration?

Yes. Specialized accelerators bring their own firmware, drivers, and supply chains, each of which adds trust and supply-chain vulnerabilities, including the possibility of hardware backdoors. The main exposures are covert implants, malicious firmware updates, and side-channel leaks, which call for stringent verification, continuous monitoring, and transparent governance.
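One small piece of "stringent verification" is refusing to load a firmware image whose digest does not match a pinned, trusted value. The sketch below uses Python's standard `hashlib` and `hmac` modules; the function names are illustrative. A pinned hash detects tampering in transit or in storage, but it cannot detect a malicious image that was pinned at the source, so real deployments would typically rely on vendor signature verification and secure or measured boot as well.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of a firmware image."""
    return hashlib.sha256(data).hexdigest()


def verify_firmware(image: bytes, pinned_sha256_hex: str) -> bool:
    """Accept a firmware image only if its digest matches the pinned value."""
    actual = sha256_hex(image)
    # compare_digest runs in time independent of where the strings differ,
    # a cheap habit that avoids timing side channels in comparisons.
    return hmac.compare_digest(actual, pinned_sha256_hex.lower())
```

An update pipeline would call `verify_firmware` before flashing and reject the update, and raise an alert for monitoring, on any mismatch.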

Conclusion

In summary, treating hardware acceleration as a universal fix is overly optimistic. Gains depend on alignment: accelerators must match the workload's characteristics, memory hierarchy, and data movement costs. When workloads are profiled and run through interoperable frameworks, GPUs, TPUs, and domain-specific cards can deliver sustained gains through disciplined optimization; when they are misaligned, acceleration amplifies bottlenecks instead. A rigorous, portfolio-minded deployment built on profiling, benchmarking, and gradual integration delivers predictable performance, energy efficiency, and resilience rather than vendor lock-in or short-lived speedups.